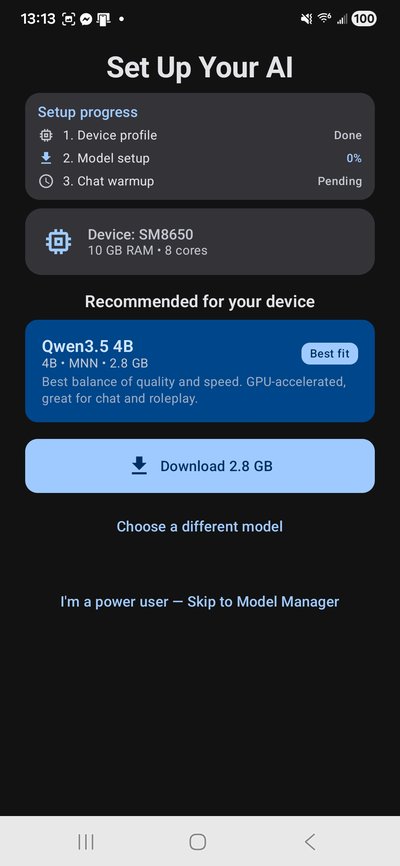

Run full AI models on your phone, fast. Up to 57 tok/s on small models and 23+ tok/s on 8B with speculative decoding. Search your documents, hear responses with offline TTS, and your AI remembers you across every conversation. No cloud. No subscription.

Airplane mode on. No Wi-Fi. No data. Still generating.

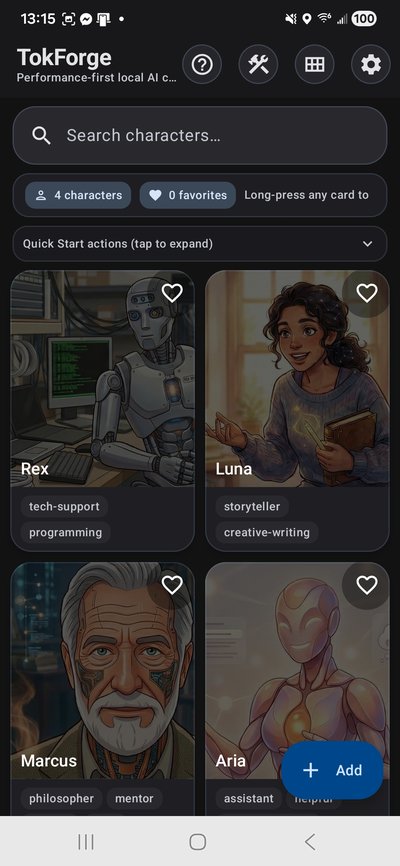

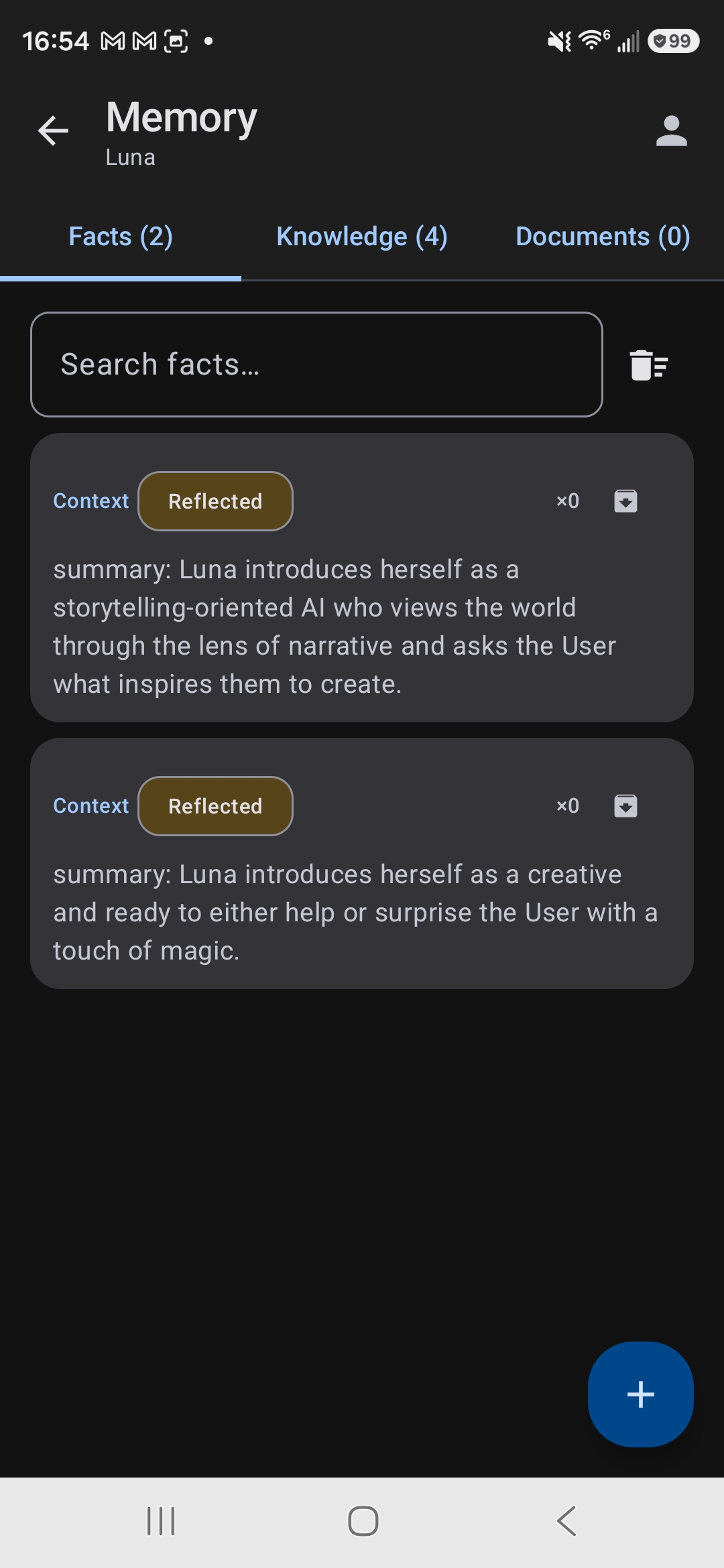

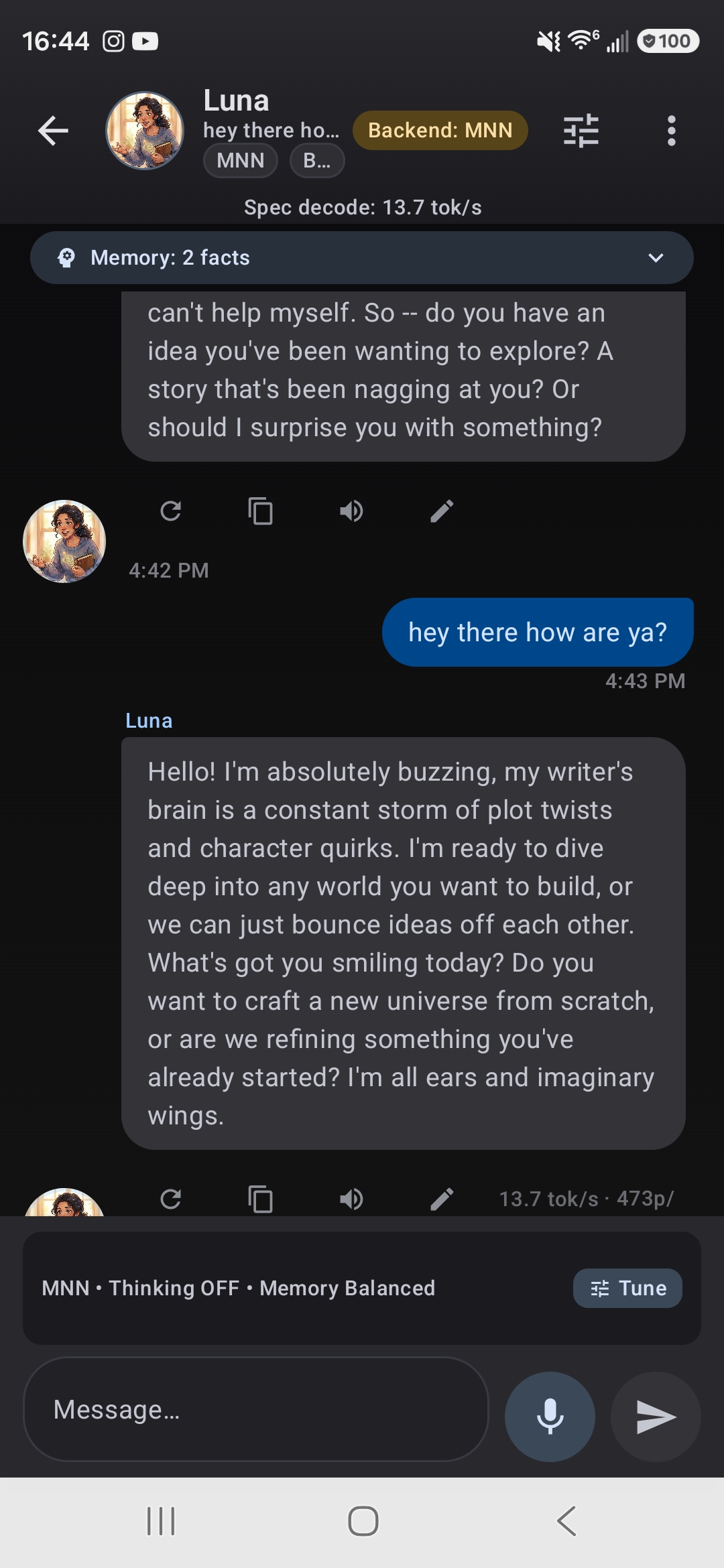

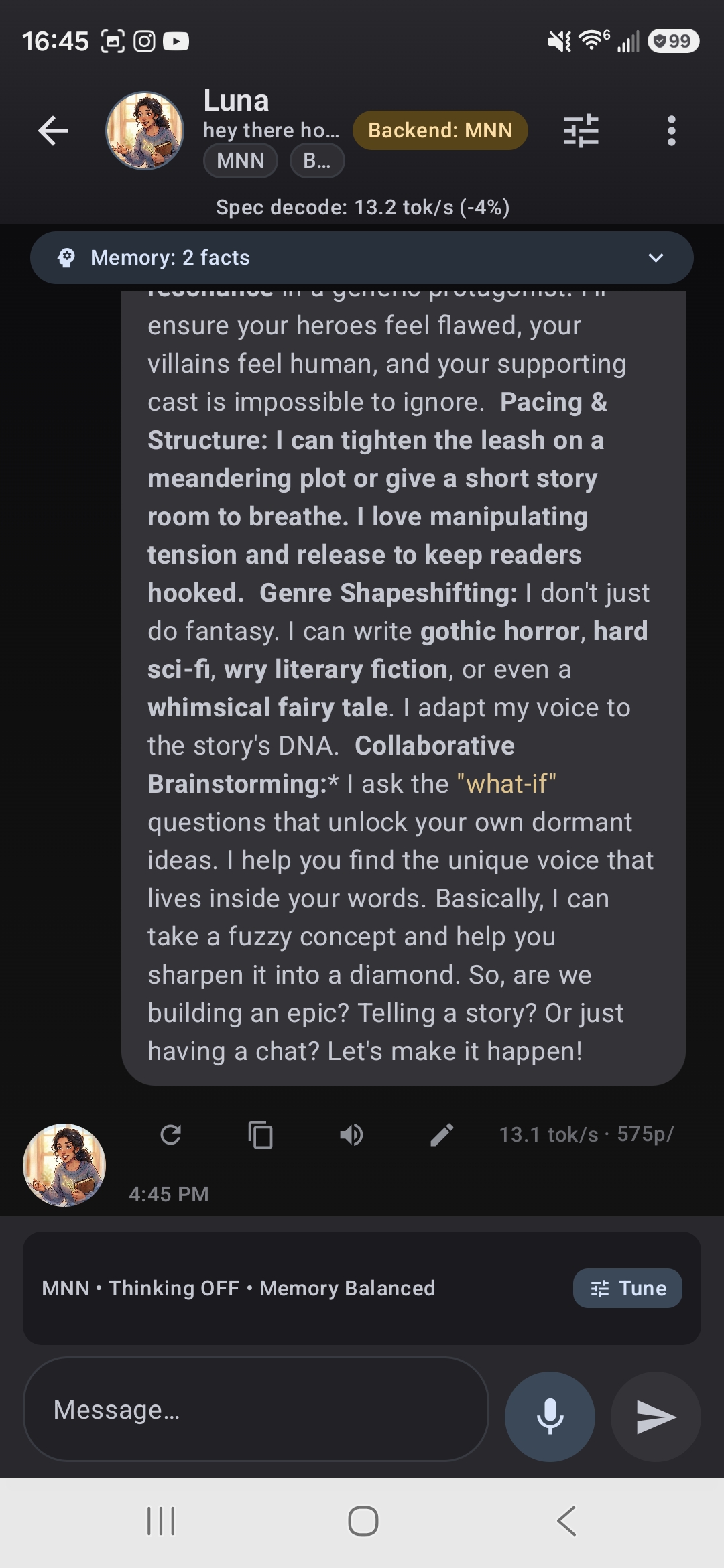

Import character cards with full backstories, alternate greetings, and lore. Each character feels different because each one is.
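For a sense of what an imported card carries, here is a minimal parsing sketch. It assumes the widely used community Character Card V2 JSON layout (`"spec": "chara_card_v2"`, with `first_mes`, `alternate_greetings`, and a `character_book` of lore entries). This page does not say which card formats TokForge accepts, and the `Character` class and `load_card` function are hypothetical.

```python
import json
import random
from dataclasses import dataclass, field


@dataclass
class Character:
    # Hypothetical in-app representation of an imported card.
    name: str
    description: str
    greetings: list[str]
    lore: list[str] = field(default_factory=list)

    def pick_greeting(self, rng: random.Random) -> str:
        # Choose among the main greeting and any alternates.
        return rng.choice(self.greetings)


def load_card(raw: str) -> Character:
    """Parse a card assumed to follow the Character Card V2 JSON layout."""
    card = json.loads(raw)
    if card.get("spec") != "chara_card_v2":
        raise ValueError("not a V2 character card")
    data = card["data"]
    # The first message plus alternates become the pool of possible openers.
    greetings = [data["first_mes"], *data.get("alternate_greetings", [])]
    # Lore lives in the optional character_book as a list of entries.
    book = data.get("character_book") or {}
    lore = [entry["content"] for entry in book.get("entries", [])]
    return Character(data["name"], data.get("description", ""), greetings, lore)
```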

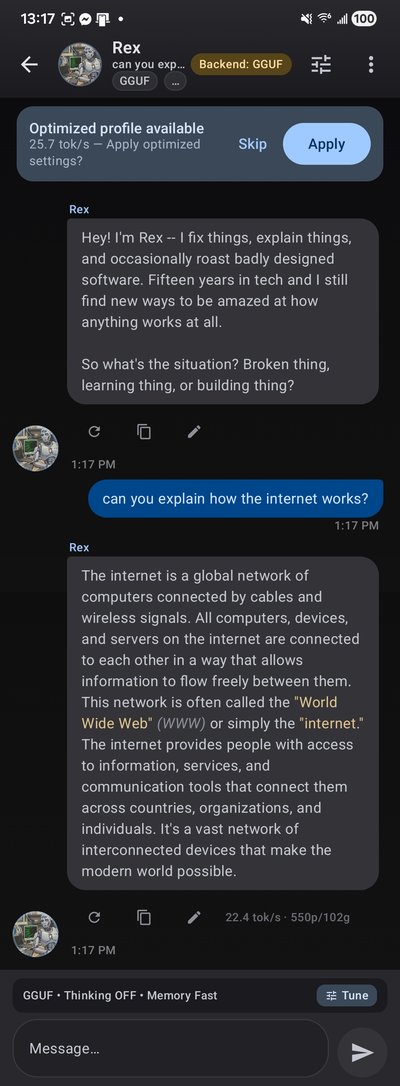

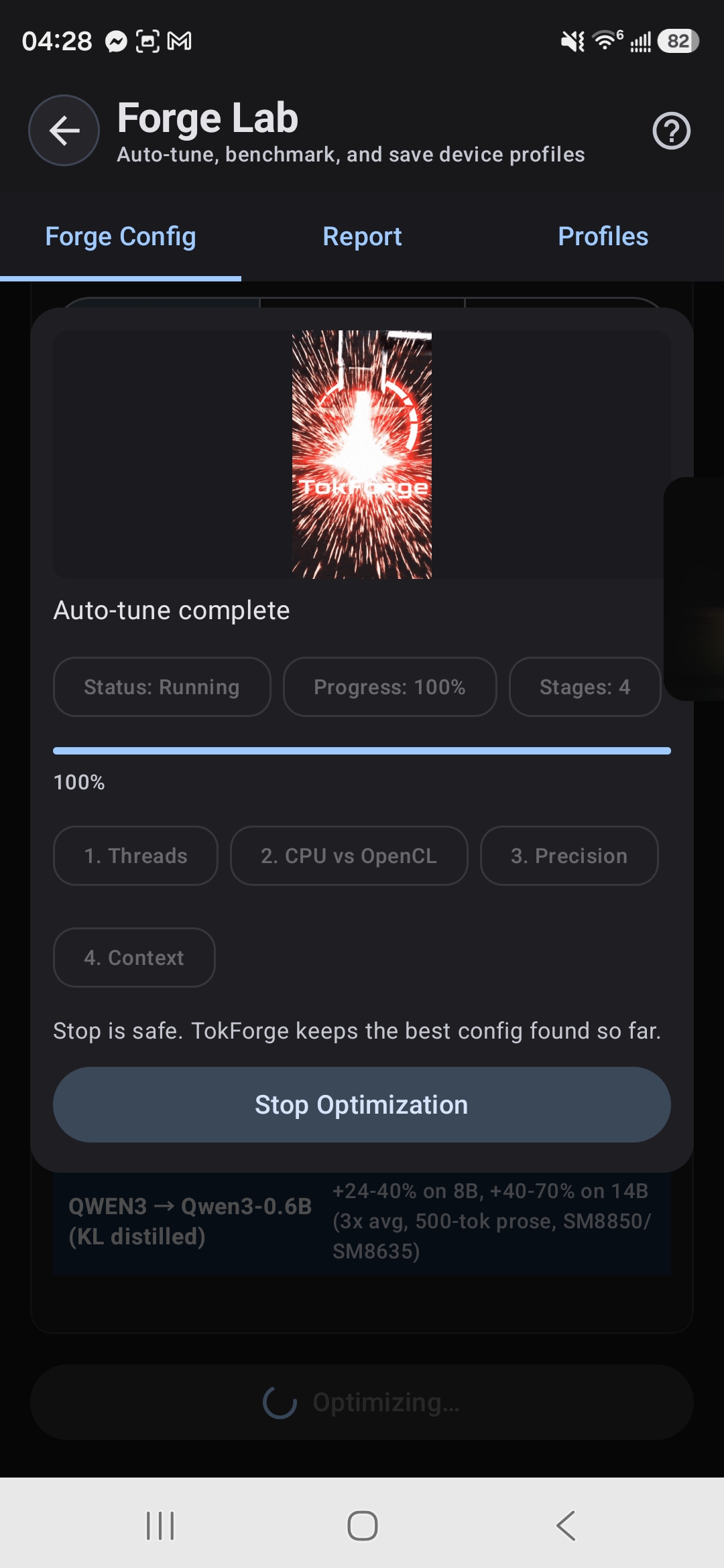

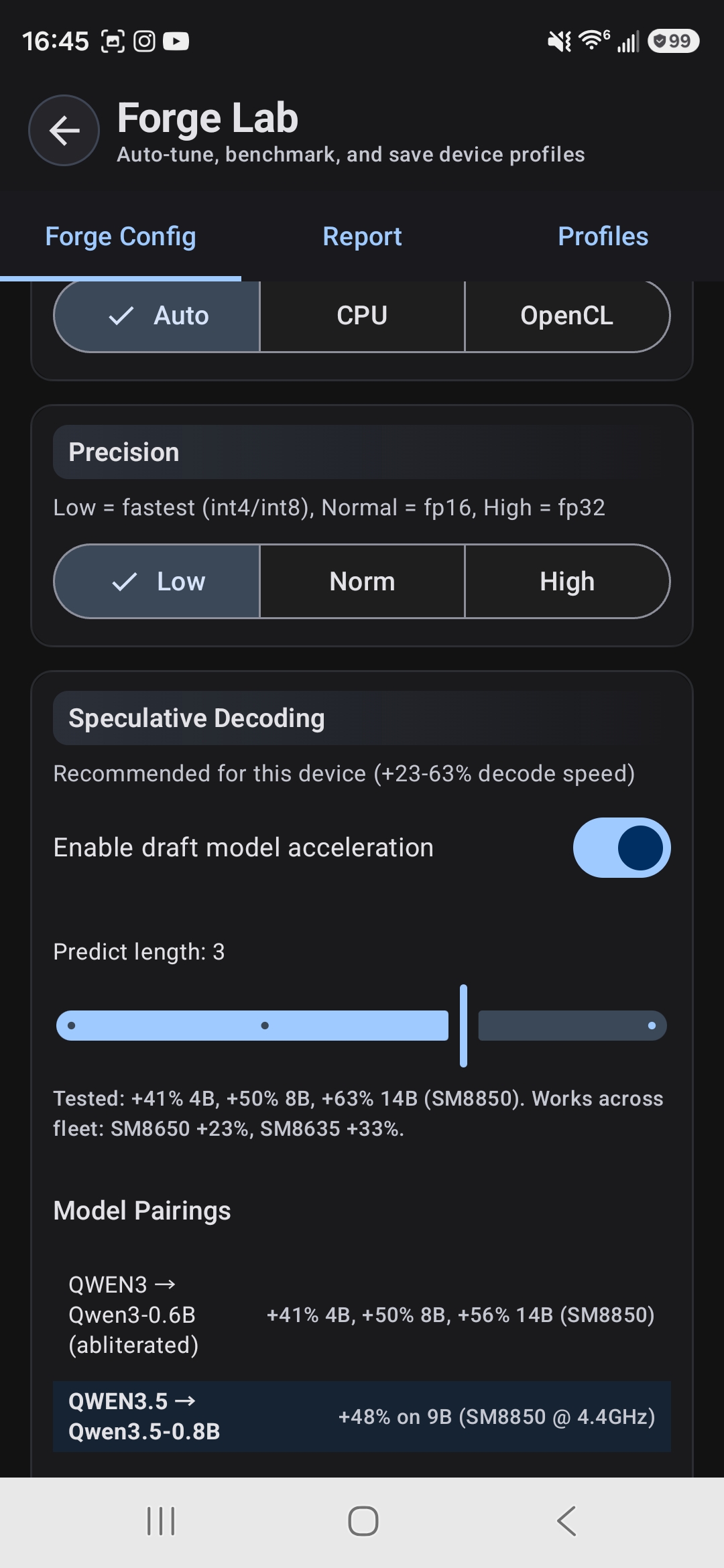

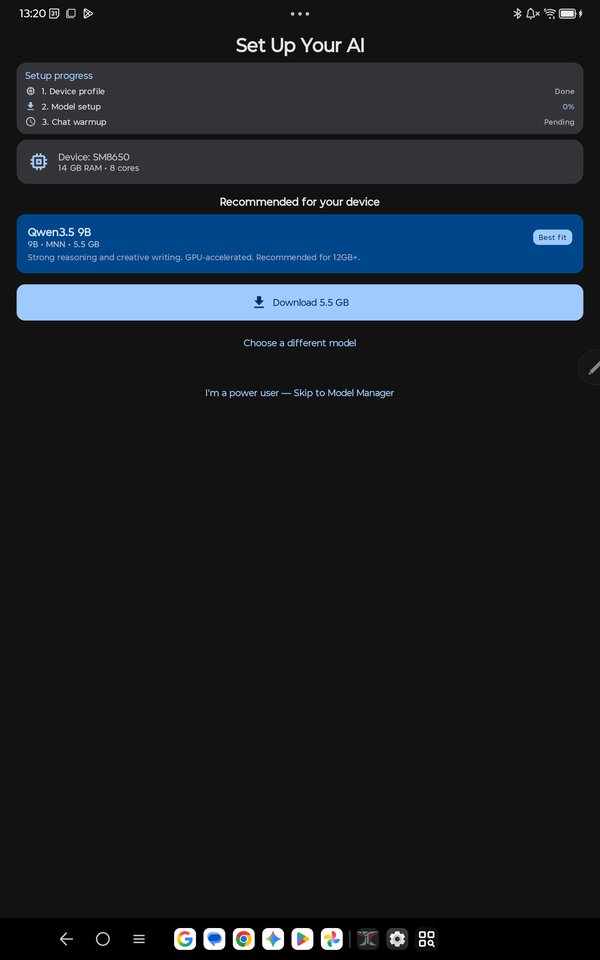

Three inference backends and five GPU paths. TokForge detects your hardware and picks the fastest config automatically — no tuning required.

46–57 tok/s on small models with TQ4 TurboQuant — aggressive GPU quantization that makes lightweight models fly.

Attach PDFs, DOCX, or EPUB files. TokForge summarizes, indexes, and searches them so your AI can answer grounded in your documents — all on-device.

Offline text-to-speech with 11 natural voices and adjustable speed. Powered by Kokoro TTS: no internet, no network round-trips, no data sent anywhere.

Each character gets its own creativity, sampling, and style settings. Your creative writer stays wild while your analyst stays precise.
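Per-character settings can be pictured as each character carrying its own temperature and top-p values, applied to the model's logits at every decoding step. The sketch below shows standard temperature scaling plus nucleus (top-p) sampling; the `SamplingProfile` class, its fields, and the example values for the "writer" and "analyst" are hypothetical, not TokForge's actual settings.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplingProfile:
    # Hypothetical per-character settings.
    temperature: float
    top_p: float


def sample_token(logits: list[float], profile: SamplingProfile, rng: random.Random) -> int:
    """Temperature + nucleus (top-p) sampling over raw logits."""
    if profile.temperature <= 0:
        # Treat temperature 0 as greedy decoding.
        return max(range(len(logits)), key=logits.__getitem__)
    scaled = [x / profile.temperature for x in logits]
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]  # numerically stable softmax numerators
    total = sum(weights)
    probs = [w / total for w in weights]
    # Keep the smallest set of most-likely tokens whose probability mass reaches top_p.
    order = sorted(range(len(probs)), key=probs.__getitem__, reverse=True)
    kept, mass = [], 0.0
    for idx in order:
        kept.append(idx)
        mass += probs[idx]
        if mass >= profile.top_p:
            break
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]


writer = SamplingProfile(temperature=1.1, top_p=0.95)  # looser, more varied output
analyst = SamplingProfile(temperature=0.2, top_p=0.5)  # tighter, near-deterministic output
```

A low temperature sharpens the distribution so the nucleus often shrinks to a single token, which is why the "analyst" profile behaves almost greedily.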

Private AI conversations, character personas, and on-device benchmarks — all running locally on your phone.

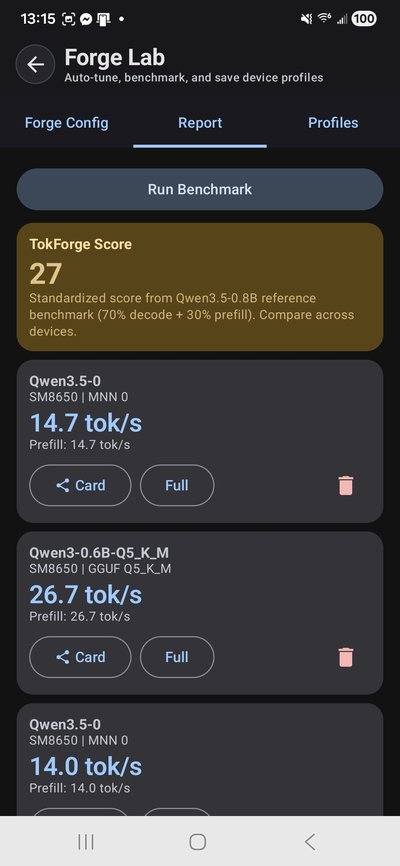

Real devices. Real tok/s. Reproducible configs.

| Device | SoC | Model | tok/s | vs OpenCL | vs CPU |
|---|---|---|---|---|---|
| OnePlus Ace 5 Ultra | D9400 | Qwen3-8B | 11.88 | +56% | +166% |
| OnePlus Ace 5 Ultra | D9400 | Qwen3-14B | 11.22 | N/A | +151% |

MNN Vulkan with tuned NHWC4 GEMV kernels for Mali G925. Figures are same-session decode peaks verified with the app's benchmark harness. Note: speculative decoding currently hurts Vulkan performance, because the verify batch (M>1) hits a slow slide-window path on Mali. Use Vulkan for autoregressive (AR) decoding only, and OpenCL for speculative decoding.

| Device | SoC | Model | tok/s | vs CPU |
|---|---|---|---|---|
| OnePlus Ace 5 Ultra | D9400 | 3B Q4_K_M | 16.85 | 3.4x |
| OnePlus Ace 5 Ultra | D9400 | 8B Q4_K_M | 8.07 | ~2x |

GGUF Vulkan with ggml-vulkan cooperative matrix support. Complements MNN Vulkan for quantized GGUF models.

The OnePlus Ace 5 Ultra (D9400, ~$400) hits 11.88 tok/s on 8B and 11.22 tok/s on 14B with Vulkan autoregressive (AR) decoding, competitive with flagships costing 3–4x more. Samsung's One UI memory overhead limits what the S26 can run comfortably, while the OnePlus runs 14B with headroom.

| Device | Target Model | Baseline | With Spec Decode | Speedup |
|---|---|---|---|---|
| RedMagic 11 Pro | Qwen3-8B | 14.05 tok/s | 23.5 tok/s | +67% |
| RedMagic 11 Pro | Qwen3-14B | 8.25 tok/s | 16.4 tok/s | +99% |
| Lenovo TB520FU | Qwen3-8B | 10.10 tok/s | 10.99 tok/s | +9% |
| OnePlus Ace 5 Ultra | Qwen3-8B | 11.88 tok/s (Vulkan AR) | N/A | N/A* |
| OnePlus Ace 5 Ultra | Qwen3-14B | 11.22 tok/s (Vulkan AR) | N/A | N/A* |

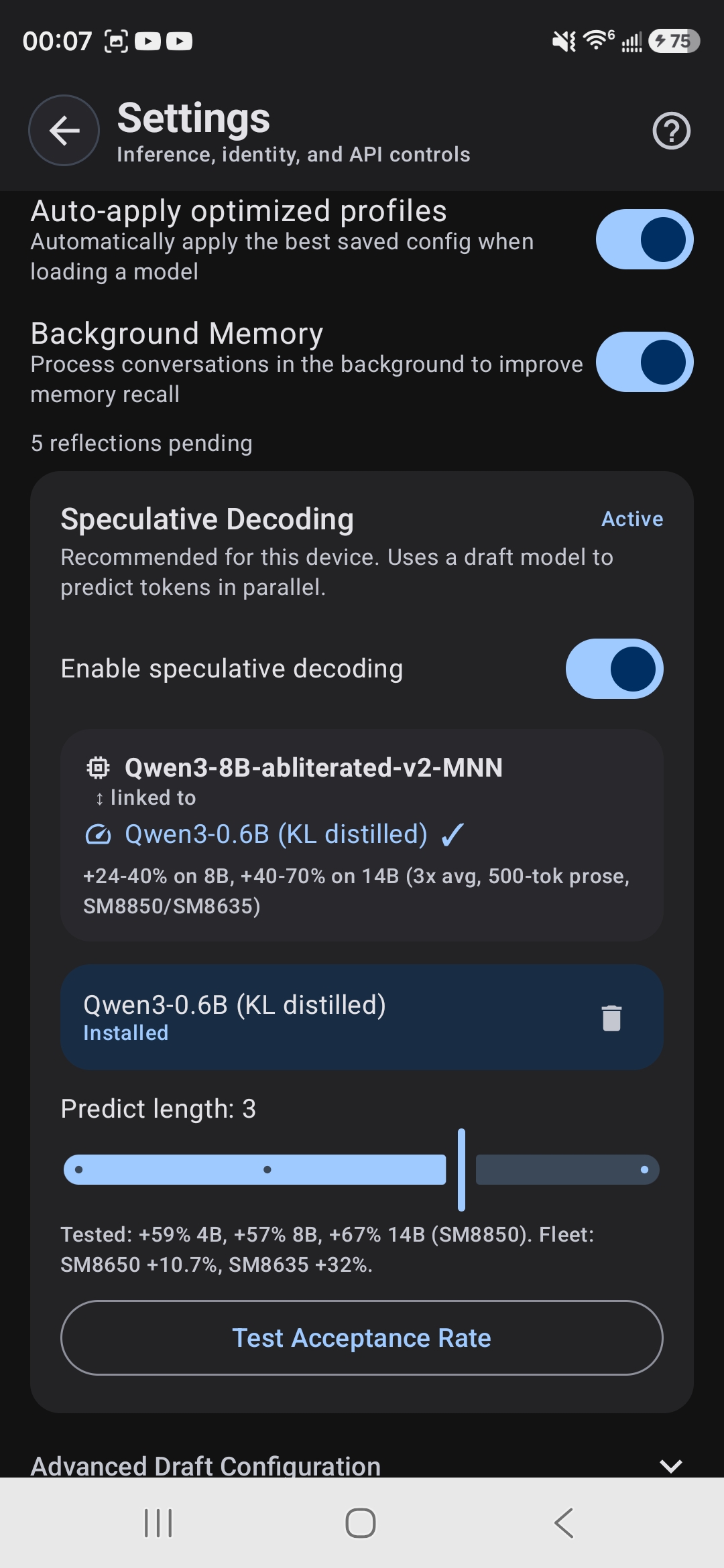

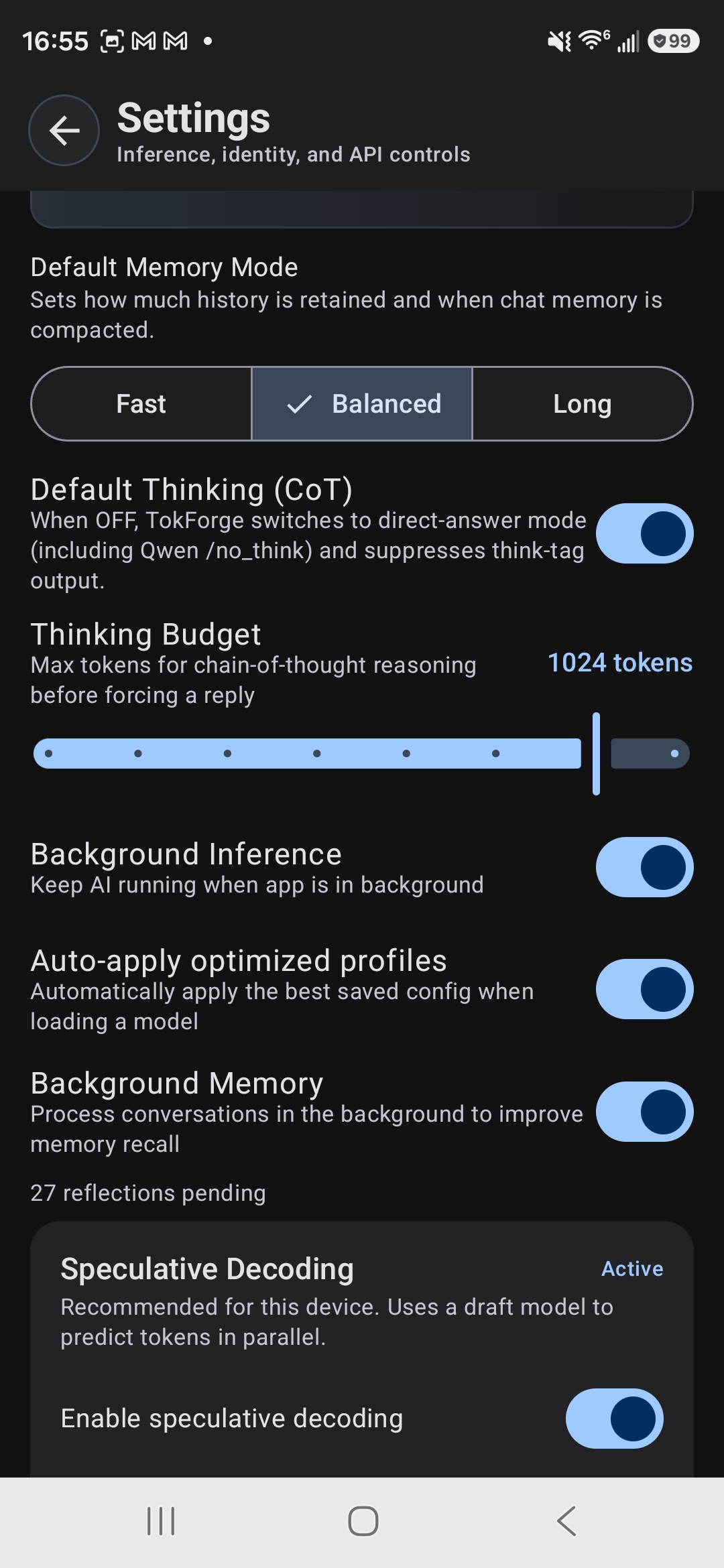

A small draft model proposes candidate tokens; the target model verifies them in a single batched forward pass. SM8850 results are verified single-packet peaks (500 decode tokens). Automatically enabled on supported devices and model pairings.
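The draft-then-verify loop can be illustrated with greedy acceptance: the draft proposes `k` tokens, the target produces its own prediction at every proposed position in one call, and the longest agreeing prefix is kept plus one token from the target. This is a toy sketch in which plain functions stand in for real models; `draft_next`, `target_next`, and the helper names are hypothetical, and it is not TokForge's implementation. With greedy acceptance the output is exactly what the target alone would generate, just in fewer target calls when the draft guesses well.

```python
from typing import Callable, Sequence

# A "model" here is any function mapping a token context to its greedy next token.
NextToken = Callable[[Sequence[int]], int]


def target_verify(target_next: NextToken, context: list[int], proposal: list[int]) -> list[int]:
    """Stand-in for ONE batched target forward pass over context + proposal.

    Returns the target's greedy prediction at each of len(proposal) + 1 positions.
    A real engine computes these together as one verify batch (M > 1).
    """
    return [target_next(context + proposal[:i]) for i in range(len(proposal) + 1)]


def speculative_step(draft_next: NextToken, target_next: NextToken,
                     context: list[int], k: int) -> list[int]:
    """One round of greedy speculative decoding; returns the newly accepted tokens."""
    # 1. The cheap draft model proposes k tokens autoregressively.
    proposal: list[int] = []
    for _ in range(k):
        proposal.append(draft_next(context + proposal))
    # 2. The target checks every proposed position at once.
    verified = target_verify(target_next, context, proposal)
    # 3. Accept the longest agreeing prefix, then take the target's token at the
    #    first mismatch (or its "bonus" token if all k drafts were accepted).
    accepted: list[int] = []
    for drafted, checked in zip(proposal, verified):
        if drafted != checked:
            break
        accepted.append(drafted)
    accepted.append(verified[len(accepted)])
    return accepted


def generate(draft_next: NextToken, target_next: NextToken,
             prompt: list[int], n_tokens: int, k: int = 4) -> list[int]:
    out = list(prompt)
    while len(out) - len(prompt) < n_tokens:
        out += speculative_step(draft_next, target_next, out, k)
    return out[len(prompt):len(prompt) + n_tokens]
```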

\* Speculative decoding currently hurts Vulkan performance, because the verify batch (M>1) falls back to the slow slide-window path. D9400 baselines therefore use the faster Vulkan AR-only decode instead.

| Device | SoC | Model | Backend | Decode tok/s |
|---|---|---|---|---|
| Galaxy S26 Ultra | SM8850 | Qwen3-8B | OpenCL | 21.0 |
| RedMagic 11 Pro | SM8850 | Qwen3-4B | OpenCL | 20.68 |
| Galaxy S26 Ultra | SM8850 | Qwen3.5-4B | CPU | 21.30 |
| OnePlus Ace 5 Ultra | D9400 | Qwen3-8B | Vulkan | 11.88 |
| OnePlus Ace 5 Ultra | D9400 | Qwen3-14B | Vulkan | 11.22 |
| RedMagic 11 Pro | SM8850 | Qwen3-8B | OpenCL | 14.05 |
| Galaxy S24 Ultra | SM8650 | Qwen3-4B | OpenCL | 13.58 |
| Xiaomi Pad 7 Pro | SM8635 | Qwen3-4B | CPU | 11.81 |
| Lenovo TB520FU | SM8650 | Qwen3-8B | OpenCL | 10.10 |

BackendCapabilityResolver auto-routes each device. On Snapdragon, standard-attention models (Qwen3) run on MNN OpenCL and linear-attention models (Qwen3.5) run on CPU. On the Dimensity 9400's Mali GPU, TokForge uses MNN Vulkan with tuned NHWC4 GEMV kernels, the first production Vulkan LLM on ARM Mali.
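The routing rules in this paragraph, together with the earlier note that speculative decoding should use OpenCL rather than Vulkan on Mali, fit in a small decision function. This is a hypothetical sketch: `DeviceInfo`, `Backend`, and `resolve_backend` are illustrative stand-ins rather than the real BackendCapabilityResolver API, and the CPU fallback for other hardware is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    MNN_OPENCL = "mnn-opencl"
    MNN_VULKAN = "mnn-vulkan"
    CPU = "cpu"


@dataclass(frozen=True)
class DeviceInfo:
    vendor: str      # hypothetical field, e.g. "qualcomm" or "mediatek"
    gpu_family: str  # hypothetical field, e.g. "adreno" or "mali"


def resolve_backend(device: DeviceInfo, linear_attention: bool, speculative: bool) -> Backend:
    """Hypothetical restatement of the routing rules described in the text."""
    if device.vendor == "qualcomm":
        # Snapdragon: linear-attention models (Qwen3.5) on CPU,
        # standard-attention models (Qwen3) on MNN OpenCL.
        return Backend.CPU if linear_attention else Backend.MNN_OPENCL
    if device.gpu_family == "mali":
        # Mali: Vulkan for plain autoregressive decode only; the speculative
        # verify batch is slow on Vulkan there, so route it to OpenCL.
        return Backend.MNN_OPENCL if speculative else Backend.MNN_VULKAN
    # Assumption: anything not covered above falls back to CPU.
    return Backend.CPU
```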

| Model | MNN OpenCL (tok/s) | GGUF CPU (tok/s) | MNN Advantage |
|---|---|---|---|
| Qwen3-0.6B | 34.8 | 42.7 | −18% |
| Qwen3-1.7B | 27.4 | 16.3 | +68% |
| Qwen3-4B | 20.68 | 9.0 | +130% |
| Qwen3-8B | 14.05 | 5.4 | +160% |
| Qwen3-14B | 8.25 | 2.7 | +206% |

MNN OpenCL overtakes GGUF CPU from 1.7B parameters upward, and at 14B MNN is about 3x faster (8.25 vs 2.7 tok/s). GGUF wins only on tiny models (<1B), where CPU overhead is negligible. GGUF uses KleidiAI i8mm and futex barrier threading (2 threads is optimal on Snapdragon 8 Elite).
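The "MNN Advantage" column is the relative decode speedup, (MNN tok/s ÷ GGUF tok/s − 1) × 100%. The short script below recomputes it from the table's own numbers to one decimal place as a sanity check; the whole-percent column agrees to within rounding (for example, 0.6B works out to −18.5%), and 8.25 / 2.7 ≈ 3.06 is the "about 3x" figure at 14B.

```python
# Decode throughput (tok/s) copied from the MNN OpenCL vs GGUF CPU table above.
RESULTS = {
    "Qwen3-0.6B": (34.8, 42.7),
    "Qwen3-1.7B": (27.4, 16.3),
    "Qwen3-4B": (20.68, 9.0),
    "Qwen3-8B": (14.05, 5.4),
    "Qwen3-14B": (8.25, 2.7),
}


def advantage_pct(mnn_tps: float, gguf_tps: float) -> float:
    """Relative speedup of MNN OpenCL over GGUF CPU, in percent."""
    return (mnn_tps / gguf_tps - 1) * 100


for model, (mnn, gguf) in RESULTS.items():
    print(f"{model:>11}: {advantage_pct(mnn, gguf):+.1f}%  ({mnn / gguf:.2f}x)")
```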

| Model | Quant | Threads | Decode tok/s | Prefill tok/s |
|---|---|---|---|---|
| Qwen3-0.6B | Q4_K_M | 2T | 42.7 | 113.0 |
| Qwen3-1.7B | Q4_K_M | 2T | 16.3 | 43.9 |
| Llama-3.2-3B | Q4_K_M | 2T | 10.1 | 26.6 |
| Qwen3-4B | Q4_K_M | 2T | 9.0 | 20.7 |
| Qwen3-8B | Q4_K_M | 2T | 5.4 | 12.0 |
| Qwen3-14B | Q4_K_M | 2T | 2.7 | 5.8 |

GGUF uses llama.cpp with KleidiAI i8mm acceleration and futex barrier threading. On Snapdragon 8 Elite, 2 threads consistently outperform 4.

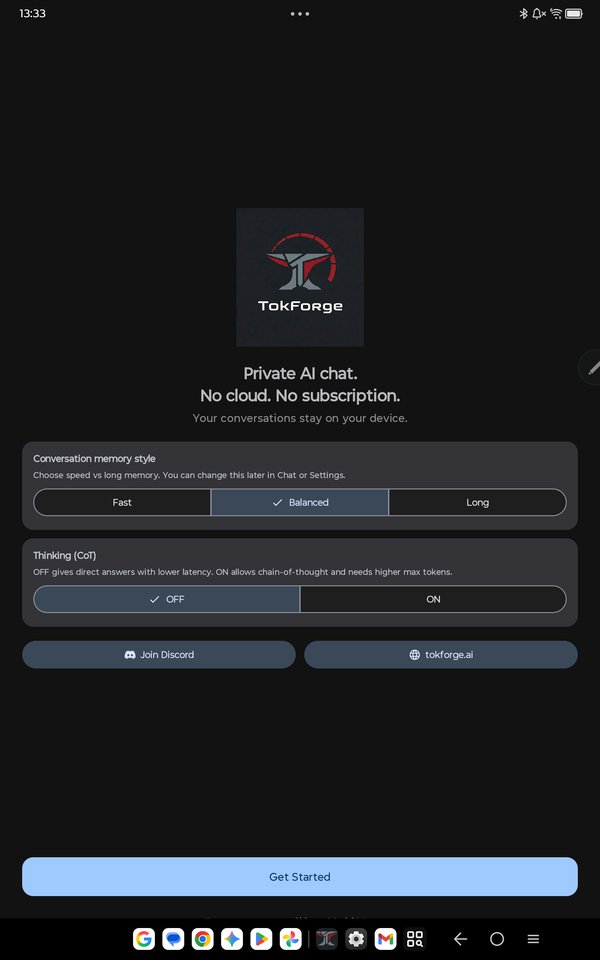

Built for privacy-conscious users, roleplay enthusiasts, and developers who want full control.

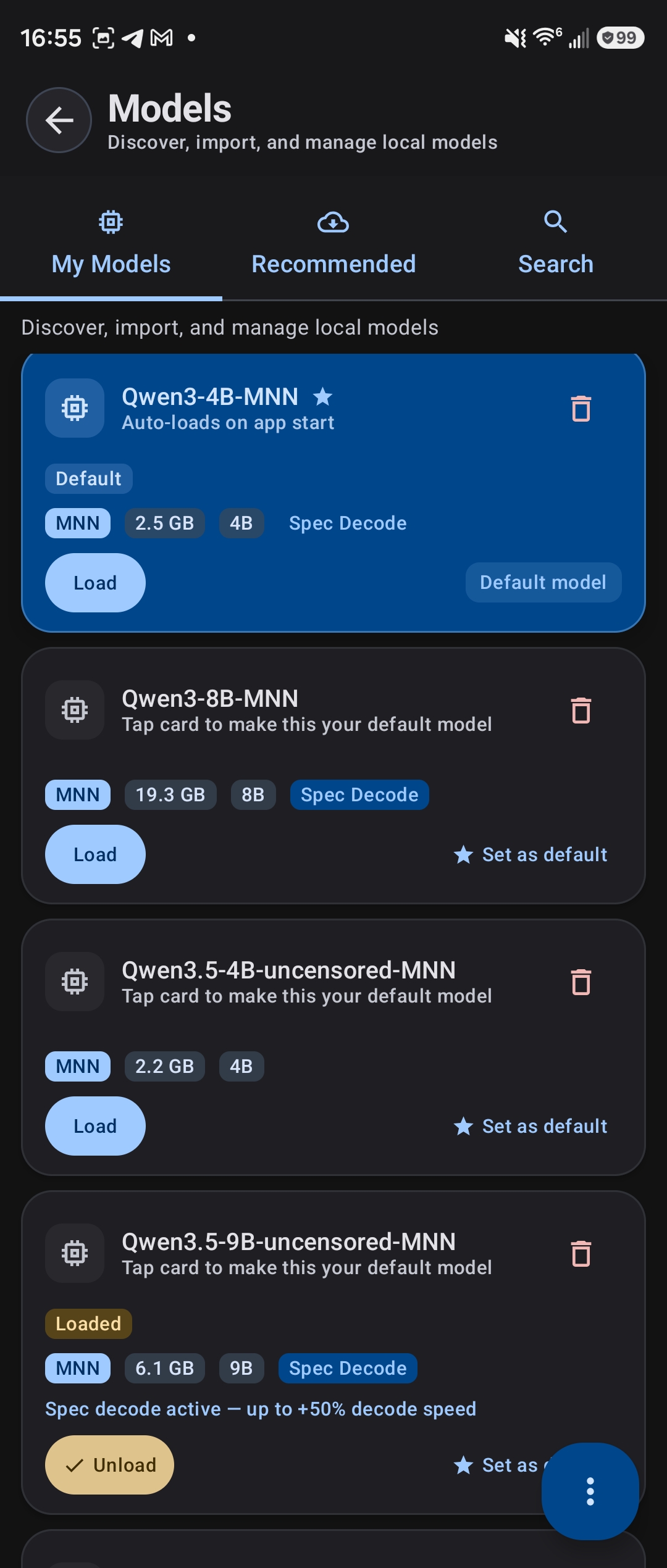

A small draft model predicts ahead and the main model verifies in one pass, nearly doubling speed on large models: 16.4 tok/s on 14B (up from 8.25) and 23.5 tok/s on 8B. TokForge auto-detects the best model pairings and picks the right GPU path per device.

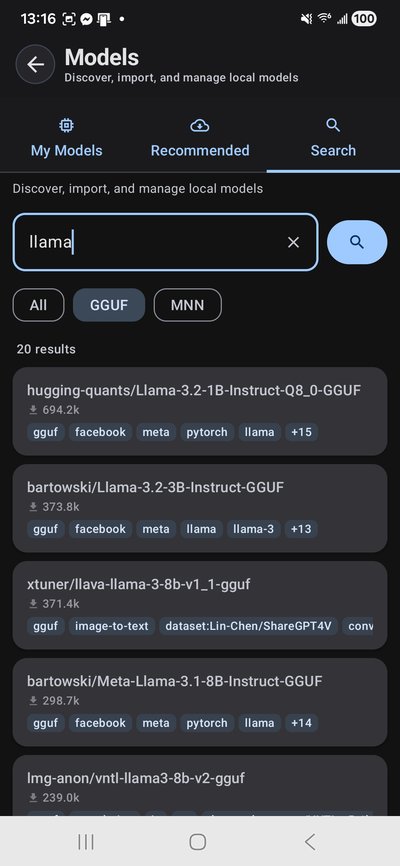

Three inference engines and five GPU acceleration paths. TokForge profiles your hardware on first launch and picks the fastest config — Snapdragon, Dimensity, or Exynos. You can also connect to a remote server for bigger models.
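First-launch profiling can be as simple as timing a short decode run on each candidate configuration and keeping the fastest. A minimal sketch, assuming each configuration (backend plus thread count, for instance) is wrapped in a hypothetical `run_decode(n_tokens)` callable; the token counts and the untimed warm-up are illustrative choices, not TokForge's actual profiler.

```python
import time
from typing import Callable

# Hypothetical: each candidate config is a callable that decodes n tokens.
DecodeFn = Callable[[int], None]


def measure_tps(run_decode: DecodeFn, n_tokens: int = 64, warmup_tokens: int = 8) -> float:
    """Decode throughput of one configuration, in tokens per second."""
    run_decode(warmup_tokens)  # untimed warm-up (e.g. kernel compilation, caches)
    start = time.perf_counter()
    run_decode(n_tokens)
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
    return n_tokens / elapsed


def pick_fastest(configs: dict[str, DecodeFn]) -> tuple[str, float]:
    """Benchmark every candidate once and return the fastest with its tok/s."""
    scores = {name: measure_tps(fn) for name, fn in configs.items()}
    best = max(scores, key=scores.__getitem__)
    return best, scores[best]
```

Listing thread counts as separate configs (say, GGUF CPU with 2 and with 4 threads) lets the same loop settle choices like the 2-thread result reported above.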

11 natural-sounding voices with adjustable speed, fully offline via Kokoro TTS. Two quality tiers: fast or premium. Voice input too — talk to your AI and hear it respond without ever touching a server.

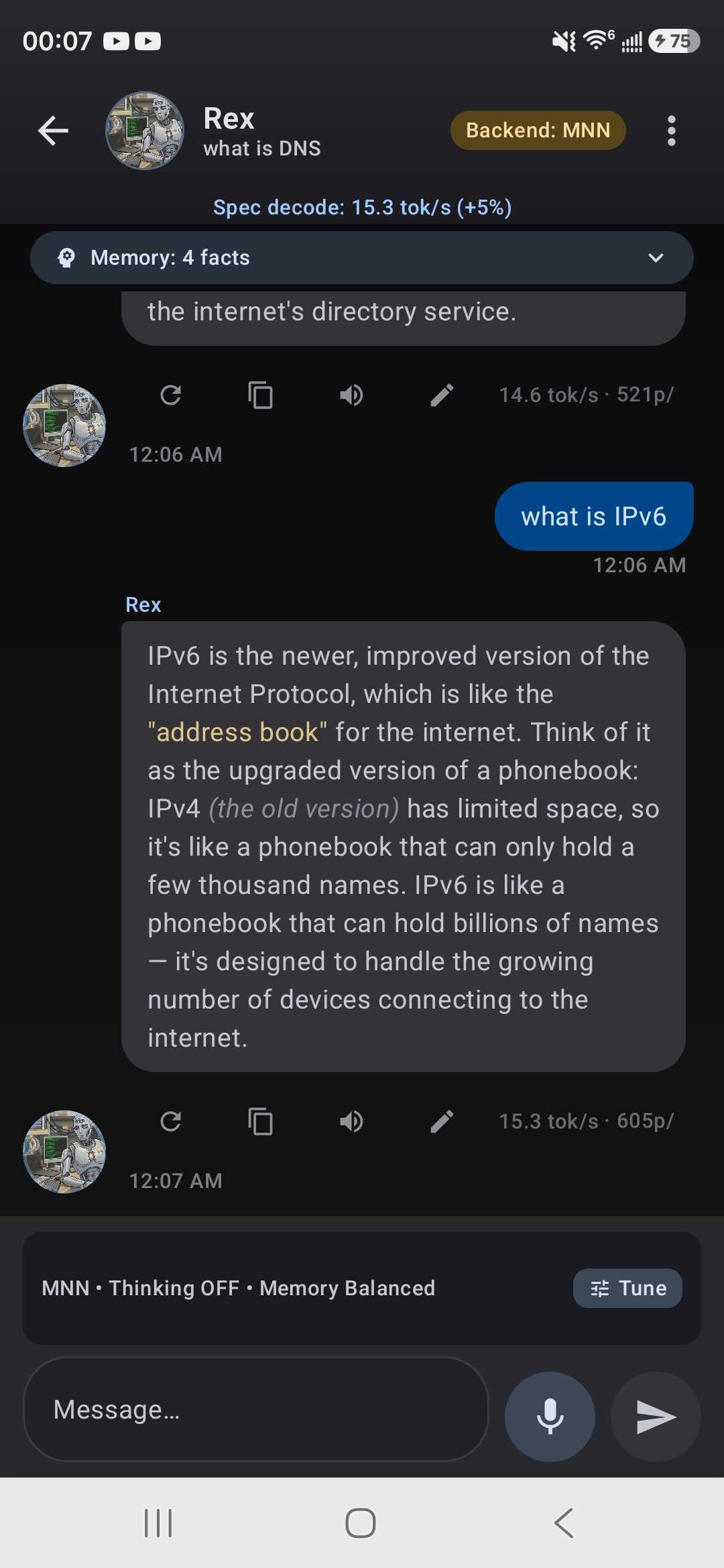

Facts, preferences, and context carry across every conversation. TokForge learns in the background while you chat, building a memory that makes each character feel like it actually knows you.
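One way to picture cross-conversation memory is a per-character fact store that grows as you chat, with the most relevant facts prepended to the next prompt. A minimal sketch under that assumption: the `MemoryStore` class, the keyword-overlap relevance score, and the prompt layout are hypothetical, since the page does not describe how TokForge extracts or ranks memories.

```python
import re
from dataclasses import dataclass, field


def _words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9']+", text.lower()))


@dataclass
class MemoryStore:
    # Hypothetical: facts remembered per character, persisted across chats.
    facts: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, character: str, fact: str) -> None:
        known = self.facts.setdefault(character, [])
        if fact not in known:  # skip exact duplicates
            known.append(fact)

    def recall(self, character: str, message: str, limit: int = 3) -> list[str]:
        """Return stored facts sharing the most words with the new message."""
        query = _words(message)
        scored = [(len(query & _words(f)), f) for f in self.facts.get(character, [])]
        # Stable sort: ties keep the order in which facts were learned.
        ranked = sorted(scored, key=lambda pair: -pair[0])
        return [f for score, f in ranked if score > 0][:limit]

    def build_prompt(self, character: str, message: str) -> str:
        memories = "\n".join(f"- {f}" for f in self.recall(character, message))
        return f"Known about the user:\n{memories}\n\nUser: {message}"
```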

TQ4 aggressive GPU quantization makes small models absurdly fast — 46–57 tok/s. Two modes trade off quality vs speed. Ideal for quick questions, brainstorming, and real-time back-and-forth where latency matters most.
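This page does not document TQ4's format, so the following is only a generic illustration of the idea behind 4-bit weight quantization: weights are split into small groups, each group stores one floating-point scale, and every weight is rounded to a signed 4-bit integer in [−8, 7]. Smaller weights mean less memory traffic per generated token. The symmetric scheme and the group size of 32 are assumptions, not TQ4's actual design.

```python
def quantize_q4(weights: list[float], group_size: int = 32) -> tuple[list[int], list[float]]:
    """Symmetric per-group 4-bit quantization (generic sketch, not TQ4)."""
    codes: list[int] = []
    scales: list[float] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to +/-7
        scales.append(scale)
        # Round each weight to the nearest code and clamp to the 4-bit range.
        codes.extend(max(-8, min(7, round(w / scale))) for w in group)
    return codes, scales


def dequantize_q4(codes: list[int], scales: list[float], group_size: int = 32) -> list[float]:
    """Rebuild approximate weights as code * (scale of that code's group)."""
    return [c * scales[i // group_size] for i, c in enumerate(codes)]
```

Rounding to the nearest code keeps each reconstructed weight within half a quantization step of the original, which is the error budget that a real scheme like TQ4 trades against speed.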

Attach PDFs, Word docs, EPUBs, or plain text. TokForge indexes and summarizes them, then your AI answers questions grounded in the actual content — all processed on-device, nothing uploaded anywhere.
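Grounded answers like this generally follow a retrieve-then-answer pattern: split each document into chunks, score the chunks against the question, and place the best matches in the model's prompt. The sketch below shows that pattern with a simple bag-of-words cosine similarity in pure Python. The chunk size, the scoring method, and the function names are assumptions for illustration; the page does not say how TokForge's index works.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk(text: str, words_per_chunk: int = 80) -> list[str]:
    """Split extracted document text into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + words_per_chunk]) for i in range(0, len(words), words_per_chunk)]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_chunks(chunks: list[str], question: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(chunks, key=lambda c: cosine(Counter(tokenize(c)), q), reverse=True)
    return ranked[:k]


def grounded_prompt(chunks: list[str], question: str) -> str:
    context = "\n---\n".join(top_chunks(chunks, question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```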

Chat + inference pipeline

TokForge is free and available on Google Play open testing. Install directly or join the community to help shape the future of private mobile AI.

No telemetry. No background reporting. Your data stays on your device unless you explicitly opt in.